This is one in a series of Labs blogposts exploring the inhouse built technologies and tools that enable everything you see in our galleries.

Our galleries and Pen experience are driven by the idea that everything a visitor can see or do in the museum itself should be accessible later on.

Part of getting the collections site and API (which drives all the interfaces in the galleries designed by Local Projects) ready for reopening has involved the gathering and, in some cases, generation of data to display with our exhibits and on our new interactive tables. In the coming weeks, I’ll be playing blogger catch-up and will write about these new features. Today, I’ll start with videos.

Besides the dozens videos produced in-house by Katie – such as the amazing Design Dictionary series – we have other videos relating to people, objects and exhibitions in the museum. Currently, these are all streamed on our YouTube channel. While this made hosting much easier, it meant that videos were not easily related to the rest of our collection and therefore much harder to find. In the past, there were also many videos that we simply didn’t have the rights to show after their related exhibition had ended, and all the research and work that went into producing the video was lost to anyone who missed it in the gallery. A large part of this effort was ensuring that we have the rights to keep these videos public, and so we are immensely grateful to Matthew Kennedy, who handles all our image rights, for doing that hard work.

A few months ago, we began the process of adding videos and their metadata in to our collections website and API. As a result, when you take a look at our page for Tokujin Yoshioka’s Honey-Pop chair , below the object metadata, you can see its related video in which our curators and conservators discuss its unique qualities. Similarly, when you visit our page for our former director, the late Bill Moggridge, you can see two videos featuring him, which in turn link to their own exhibitions and objects. Or, if you’d prefer, you can just see all of our videos here.

In addition to its inclusion in the website, video data is also now available over our API. When calling an API method for an object, person or exhibition from our collection, paths to the various video sizes, formats and subtitle files are returned. Here’s an example response for one of Bill’s two videos:

{

"id": "68764297",

"youtube_url": "www.youtube.com/watch?v=DAHHSS_WgfI",

"title": "Bill Moggridge on Interaction Design",

"description": "Bill Moggridge, industrial designer and co-founder of IDEO, talks about the advent of interaction design.",

"formats": {

"mp4": {

"1080": "https://s3.amazonaws.com/videos.collection.cooperhewitt.org/DIGVID0059_1080.mp4",

"1080_subtitled": "https://s3.amazonaws.com/videos.collection.cooperhewitt.org/DIGVID0059_1080_s.mp4",

"720": "https://s3.amazonaws.com/videos.collection.cooperhewitt.org/DIGVID0059_720.mp4",

"720_subtitled": "https://s3.amazonaws.com/videos.collection.cooperhewitt.org/DIGVID0059_720_s.mp4"

}

},

"srt": "https://s3.amazonaws.com/videos.collection.cooperhewitt.org/DIGVID0059.srt"

}

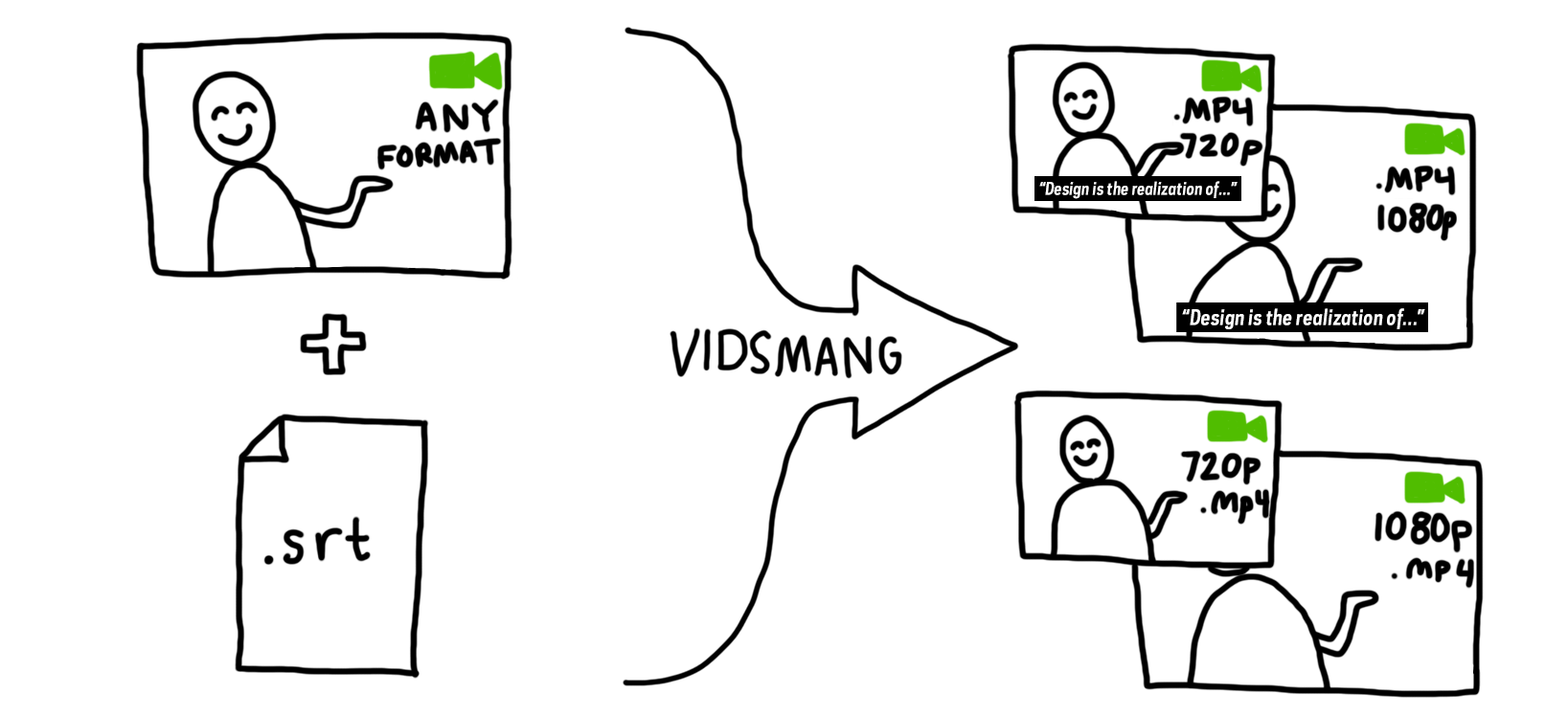

The first step in accomplishing this was to process the videos into all the formats we would need. To facilitate this task, I built VidSmanger, which processes source videos of multiple sizes and formats into consistent, predictable derivative versions. At its core, VidSmanger is a wrapper around ffmpeg, an open-source multimedia encoding program. As its input, VidSmanger takes a folder of source videos and, optionally, a folder of SRT subtitle files. It outputs various sizes (currently 1280×720 and 1920×1080), various formats (currently only mp4, though any ffmpeg-supported codec will work), and will bake-in subtitles for in-gallery display. It gives all of these derivative versions predictable names that we will use when constructing the API response.

Because VidSmanger is a shell script composed mostly of simple command line commands, it is easily augmented. We hope to add animated gif generation for our thumbnail images and automatic S3 uploading into the process soon. Here’s a proof-of-concept gif generated over the command line using these instructions. We could easily add the appropriate commands into VidSmanger so these get made for every video.

For now, VidSmanger is open-source and available on our GitHub page! To use it, first clone the repo and the run:

./bin/init.sh

This will initialize the folder structure and install any dependencies (homebrew and ffmpeg). Then add all your videos to the source-to-encode folder and run:

./bin/encode.sh

Now you’re smanging!

Since you are hosting the video on s3 was there any reason not to just use their ElasticTranscoder service (or one of the alternatives)?

Truthfully, I didn’t even know that this existed! So thanks for

letting me know. Vidsmanger served its purpose in the short-term but I

will take a look at integrating ElasticTranscoder in the future.

Cool – We’re also using some services for on the fly image resizing (Imgix in our case but there are a bunch of them including some open source ones). Also look at CloudFront for serving the files if speed is of concern to your users. It made a ton of difference with My Tours.

Pingback: On Exhibitions and Iterations | Cooper Hewitt Labs